Enterprise AI Architecture: How We Connect 10+ Systems Without Breaking Anything

Enterprise AI integration is no longer about bolting a chatbot onto a legacy stack. It is about system architecture that lets autonomous agents plan, code, review, and ship — across Jira, GitHub, CI/CD, cloud runtimes, and multiple production apps — without a human babysitting every step. In this article I'll walk through the exact architecture we run at EGO Digital to connect 10+ systems, the automation loop that replaced our project managers' and mid-level engineers' routine work, and the lessons from putting it into production on four concurrent products.

The Problem: The Hidden Cost of "Just Add Another Integration"

Most engineering organizations look stable from the outside and chaotic from the inside. A typical mid-sized product has:

- A Jira (or Linear) workspace for planning.

- One or more Git repositories with branch protection and required reviews.

- A CI pipeline (GitHub Actions, GitLab CI, or similar).

- A staging and production environment, often on different clouds.

- A knowledge base, architecture docs, and coding rules scattered across Notion, Confluence, and AGENTS.md files.

- An LLM API, a vector store, observability tools, and a handful of internal services.

Every new integration adds a combinatorial number of failure modes. A naïve "AI pull request bot" that ignores repository conventions will happily rewrite your folder structure, break your design system, or merge code that passes tests but violates your domain rules. This is the real reason enterprise AI integration projects stall: the AI is the easy part; the architecture around the AI is what determines whether you ship or regress.

The question we had to answer was simple: Can we route work from idea to production through 10+ systems, with AI agents doing the execution, and never lose architectural integrity?

The Solution: A Ticket-Driven, Agent-Native Delivery Loop

Our answer is an event-driven orchestration layer where a ticket's status is the single source of truth, and cloud AI agents are first-class workers in the pipeline — peers to humans, not tools used by them.

The contract is deliberately boring, which is what makes it robust:

- Jira is the control plane. A status transition is a signal. No chat commands, no magic buttons.

- The repository is the knowledge base. Architecture rules live in AGENTS.md files at the app level (api/AGENTS.md, client-app/AGENTS.md, admin-app/AGENTS.md in our monorepo). Agents read them before they think.

- GitHub Actions is the delivery plane. Tests, builds, and deploys are code, reviewed like any other code.

- Cloud AI agents are the workers. They are stateless between tasks; all context lives in Jira + Git + the rules files.
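To make "agents read them before they think" concrete, here is a minimal sketch of how a run could collect the AGENTS.md files that govern the paths a task touches. This is an illustration, not our production code: `rules_for` is a hypothetical helper, and walking up from each touched file to the repo root is an assumed lookup policy.

```python
from pathlib import Path


def rules_for(repo_root: Path, touched: list[str]) -> list[Path]:
    """Collect the AGENTS.md files that govern the touched paths.

    Hypothetical policy: for each touched file, walk up from its
    directory to the repo root and keep every AGENTS.md on the way.
    """
    found: list[Path] = []
    for rel in touched:
        current = (repo_root / rel).parent
        while True:
            candidate = current / "AGENTS.md"
            if candidate.is_file() and candidate not in found:
                found.append(candidate)
            # Stop at the repo root, or at the filesystem root if the
            # path unexpectedly lies outside the repository.
            if current == repo_root or current == current.parent:
                break
            current = current.parent
    return found
```

With this policy, a change under api/ picks up api/AGENTS.md and nothing from client-app/ or admin-app/, which matches the per-app split described above.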

The pipeline, end to end

Here is the exact loop we run in production:

1. Task intake (Jira → Planner Agent).

A task is created in Jira with a plain-language description. A webhook notifies our automation layer. The Planner Agent clones the relevant repo, reads AGENTS.md and related modules, and produces an implementation plan: files to touch, risks, open questions. The plan and questions are written back into the Jira ticket body, and the status moves Backlog → To Do.
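The shape of a plan can be sketched as a small data structure. The field names below mirror the three parts named above (files to touch, risks, open questions); the `Plan` class and its plain-text rendering are assumptions for illustration, not the exact format our Planner Agent writes.

```python
from dataclasses import dataclass, field


@dataclass
class Plan:
    """Hypothetical container for a Planner Agent's output."""
    ticket_key: str
    files_to_touch: list[str]
    risks: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)

    def to_ticket_body(self) -> str:
        """Render the plan as plain text for the ticket description."""
        sections = [
            ("Files to touch", self.files_to_touch),
            ("Risks", self.risks),
            ("Open questions", self.open_questions),
        ]
        lines = [f"Implementation plan for {self.ticket_key}"]
        for title, items in sections:
            lines.append("")
            lines.append(f"{title}:")
            # Empty sections are shown explicitly so the reviewer can
            # see that the agent found nothing, rather than skipped it.
            lines.extend(f"- {item}" for item in items or ["(none)"])
        return "\n".join(lines)
```

Keeping the plan as structured data until the last moment makes it easy to check, before the ticket is updated, that every section was actually filled in.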

2. Human review (the only required human step).

I read the plan. If I'm happy, I drag the card To Do → In Progress. If the plan needs more thought, I drag it back To Do → Backlog, optionally adding a comment, and the Planner Agent re-plans with the new context. This is the exception-native design principle: humans intervene only when the agent's plan is not good enough, not on every ticket.

3. Implementation (In Progress → Implementer Agent).

The status change triggers the Implementer Agent. It creates a feature branch, implements the approved plan, runs local checks, and opens a Pull Request on GitHub with the Jira key in the title and a summary in the body.
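Putting the Jira key in the PR title is what lets later steps link a PR back to its ticket. A minimal sketch of that naming convention follows; the `feature/` prefix, the 40-character slug limit, and the helper names are assumptions, and only "Jira key in the title" comes from our actual setup.

```python
import re
from typing import Optional

# Matches keys such as MASHU-312 or NEURO-88.
JIRA_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def branch_name(key: str, summary: str) -> str:
    """Build a feature branch name from the ticket key and summary."""
    slug = re.sub(r"[^a-z0-9]+", "-", summary.lower())[:40].strip("-")
    return f"feature/{key}-{slug}"


def pr_title(key: str, summary: str) -> str:
    """Build a PR title that starts with the Jira key."""
    return f"{key}: {summary}"


def extract_key(title: str) -> Optional[str]:
    """Recover the Jira key from a PR title, or None if absent."""
    match = JIRA_KEY.search(title)
    return match.group(0) if match else None
```

The round trip (`pr_title` then `extract_key`) is the part that matters: anything downstream that sees only the PR can still find the ticket.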

4. CI and auto-deploy (GitHub Actions).

The PR triggers the standard pipeline: lint, type-check, unit tests, integration tests. On green + merge to develop, GitHub Actions auto-deploys to dev. After smoke tests pass and the PR is promoted to main, it auto-deploys to prod.
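The promotion rule in this step fits in a few lines. The sketch below restates it as a pure function for clarity; in practice the rule lives in the GitHub Actions workflow files, and the function name and boolean inputs here are hypothetical.

```python
from typing import Optional


def deploy_target(branch: str, checks_green: bool, smoke_passed: bool) -> Optional[str]:
    """Decide where a merge should deploy, if anywhere.

    develop -> dev when the pipeline is green;
    main    -> prod only when dev smoke tests have also passed.
    """
    if not checks_green:
        return None
    if branch == "develop":
        return "dev"
    if branch == "main" and smoke_passed:
        return "prod"
    return None
```

Note that a green pipeline on any other branch deploys nowhere: feature branches only ever reach an environment by being merged.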

5. Closure.

Deployment events call back to Jira, moving the ticket to Done with the deploy SHA and environment URLs attached.
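As a rough sketch, the callback amounts to two Jira REST calls: one comment carrying the deploy details and one status transition. The code below only builds the requests and does not send them. It assumes the Jira REST API v2 comment and transitions endpoints; the transition ID is workflow-specific and must be looked up for your own project, and `closure_requests` is a hypothetical helper.

```python
def closure_requests(base_url: str, key: str, done_transition_id: str,
                     sha: str, env_urls: dict[str, str]) -> list[dict]:
    """Build (but do not send) the requests that close a ticket."""
    issue = f"{base_url.rstrip('/')}/rest/api/2/issue/{key}"
    lines = [f"Deployed {sha}"]
    lines += [f"{env}: {url}" for env, url in env_urls.items()]
    return [
        # 1. Attach the deploy SHA and environment URLs as a comment.
        {"method": "POST", "url": f"{issue}/comment",
         "json": {"body": "\n".join(lines)}},
        # 2. Move the ticket to Done. The ID is an assumption: it
        #    depends on the workflow configured in the Jira project.
        {"method": "POST", "url": f"{issue}/transitions",
         "json": {"transition": {"id": done_transition_id}}},
    ]
```

Commenting before transitioning means that a ticket sitting in Done always carries its deploy details, even if the second call fails and has to be retried.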

Why this works where other "AI coders" fail

- Status-as-contract. There are only four meaningful transitions (Backlog → To Do, To Do → Backlog, To Do → In Progress, and In Progress → Done once the merged PR has deployed). Agents cannot improvise outside them.

- Plan before code. Separating the Planner and Implementer agents means the expensive, high-context step (reading the repo and understanding architecture) happens before any branch is created. 90% of bad PRs are prevented at the planning stage, not caught in review.

- Rules live next to code. Each app has its own AGENTS.md with the architectural contract: allowed dependencies, folder layout, domain boundaries. When the rules change, they change in the repo, via PR — so the agents' behavior is versioned alongside the product.

- No shared mutable state between agents. Every agent run starts from a clean clone. Context is rebuilt from Jira + Git + rules every time. This eliminates the "my agent remembers something wrong from last Tuesday" class of bugs.
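The status-as-contract idea can be expressed as a lookup table: every allowed transition maps to the agent it wakes up (or to nothing), and anything not in the table is rejected. This is a minimal sketch under assumptions: the agent labels and the `agent_for` helper are hypothetical, and I treat the final transition as In Progress → Done.

```python
from typing import Optional

# (old status, new status) -> agent to trigger, or None if no agent runs.
TRANSITIONS: dict[tuple[str, str], Optional[str]] = {
    ("Backlog", "To Do"): None,               # plan written, waiting for a human
    ("To Do", "Backlog"): "planner",          # plan rejected, re-plan with comments
    ("To Do", "In Progress"): "implementer",  # plan approved
    ("In Progress", "Done"): None,            # closure after deploy
}


class IllegalTransition(ValueError):
    """Raised when a status change falls outside the contract."""


def agent_for(old: str, new: str) -> Optional[str]:
    """Return the agent a transition should trigger, if any."""
    try:
        return TRANSITIONS[(old, new)]
    except KeyError:
        raise IllegalTransition(f"{old} -> {new} is not allowed") from None
```

Because the function depends only on its two arguments, it carries no memory between runs, which is the same property we want from the agents themselves.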

A Real Example: Running This on Four Products in Parallel

We are not describing a lab experiment. This exact architecture runs today across four independent products:

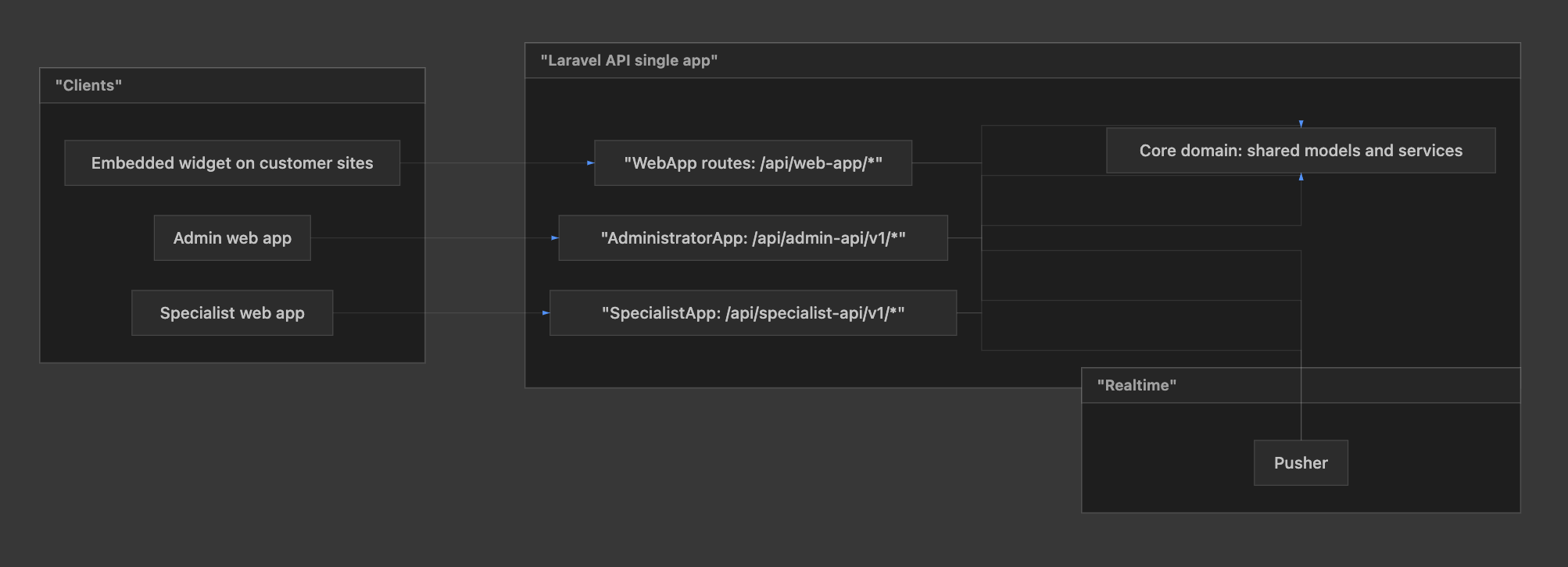

Mashu AI — our multi-app conversational platform (widget, specialist, admin, API).

- Third-party websites load the embeddable widget (widget.js). The widget talks only to the WebApp HTTP API (/api/web-app/*) and uses cookies for the session.

- The Admin app is the back office; it uses the AdministratorApp API (/api/admin-api/v1/*).

- The Specialist app is the practitioner dashboard; it uses the SpecialistApp API (/api/specialist-api/v1/*) with a Bearer token.

- All three APIs are one Laravel application: shared Core domain (data, services), separate route prefixes per client.

- Pusher is used for realtime events where the product needs live updates.
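The "one application, separate route prefixes per client" layout can be illustrated with a prefix lookup. The real routing is done by Laravel, so this Python sketch only shows the idea; the three prefixes come from the list above, while `client_for` and the client labels are hypothetical.

```python
from typing import Optional

# Route prefix -> the client that owns it.
API_PREFIXES = {
    "/api/web-app/": "widget",
    "/api/admin-api/v1/": "admin",
    "/api/specialist-api/v1/": "specialist",
}


def client_for(path: str) -> Optional[str]:
    """Return which client a request path belongs to, or None."""
    for prefix, client in API_PREFIXES.items():
        if path.startswith(prefix):
            return client
    return None
```

A mapping like this is also useful to an agent: a plan that touches routes under one prefix should not need to change the other two clients.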

EGO Digital — our company website and marketing platform.

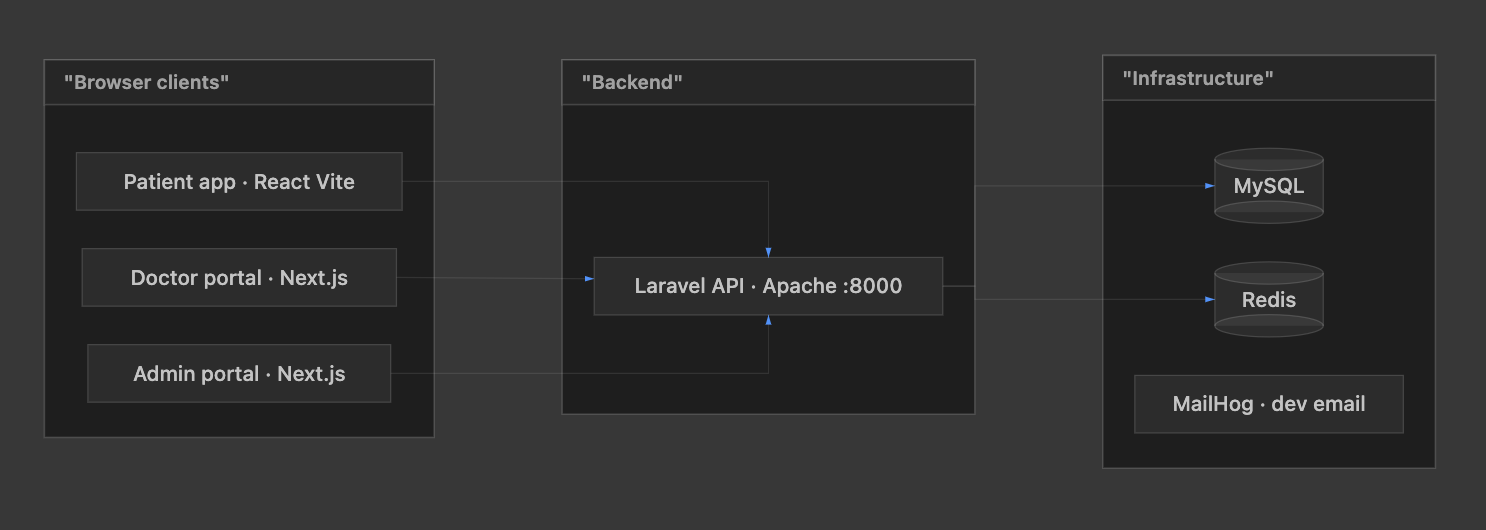

NeuroLab — our healthcare AI orchestration product, where the patient / doctor / admin / bot channels must all stay aligned around one clinical source of truth.

The NeuroLab platform has three browser clients: a patient app (React / Vite), plus a doctor portal and an admin portal (both Next.js). They talk to one Laravel HTTP API (often exposed via Apache on port 8000). The API uses MySQL for persistent data and Redis for caching, sessions, and queues as configured; in local Docker stacks, MailHog can capture outbound mail (e.g. password resets).

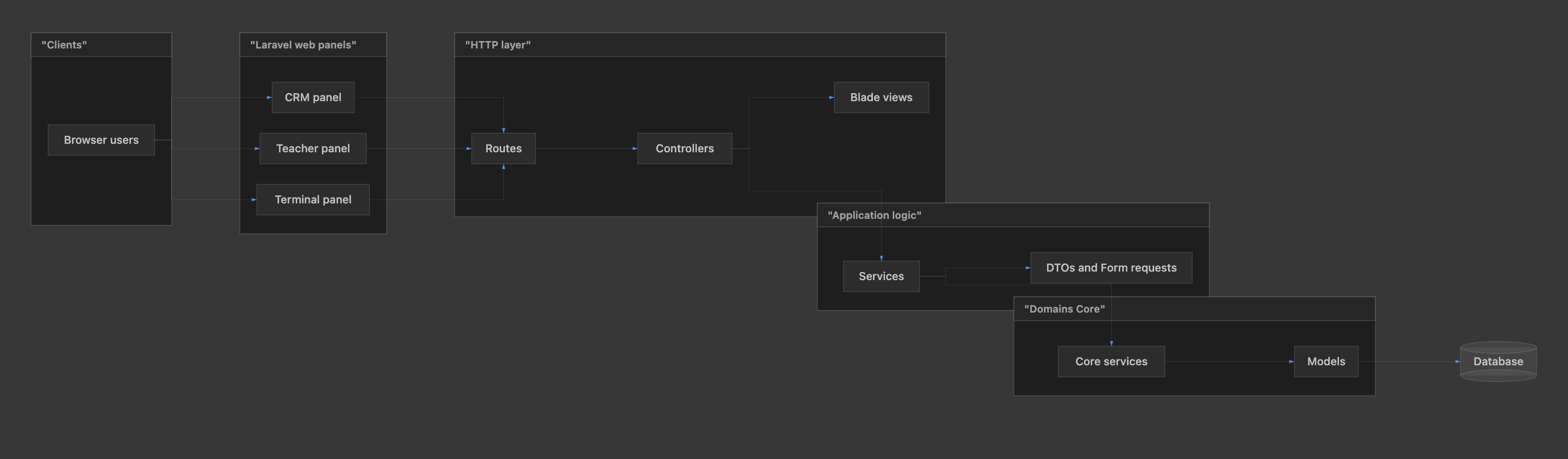

Bruner CRM — a client-facing CRM product with its own deploy cadence.

- Users open a browser and hit one of three web panels (CRM, Teacher, Terminal).

- Each panel has its own routes, controllers, Blade views, and HTTP requests / DTOs.

- Controllers stay thin; they call services.

- Domains/Core holds models, migrations, and core services — shared domain logic and data access patterns.

- Database stores application data.

- CI/CD (e.g. on push to dev) builds the app and deploys it to the server over SSH.

Concretely, on the Mashu AI monorepo, a single day might look like this:

- Ticket MASHU-312: "Add rate-limit headers to the widget's chat endpoint." Planner reads api/AGENTS.md, identifies the middleware layer, writes a 6-step plan, asks one clarifying question about the 429 payload shape. I answer in the ticket, approve, drag to In Progress. Implementer opens a PR within minutes, CI passes, auto-deploy to dev, I sanity-check, promote, prod deploy, ticket closed.

- In parallel, ticket NEURO-88 ("Log chat-bot handoff events to the analytics pipeline") is being planned against the NeuroLab repo by a different cloud agent run. Same contract, different repo, different rules.

- And a marketing ticket for ego-digital.com is taking a blog component change through the same loop.
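"Same contract, different repo" works because the ticket key alone is enough to pick the repository. A minimal sketch of that routing follows; the MASHU and NEURO project prefixes come from the tickets above, but the repository names and the `repo_for` helper are hypothetical.

```python
import re

# Jira project prefix -> repository (repository names are assumptions).
REPOS = {
    "MASHU": "mashu-ai",
    "NEURO": "neurolab",
}


def repo_for(ticket_key: str) -> str:
    """Pick the repository an agent run should clone for a ticket."""
    match = re.fullmatch(r"([A-Z][A-Z0-9]*)-\d+", ticket_key)
    if match is None:
        raise ValueError(f"not a Jira key: {ticket_key!r}")
    project = match.group(1)
    if project not in REPOS:
        raise LookupError(f"no repository configured for {project}")
    return REPOS[project]
```

Failing loudly on an unknown project is deliberate: an agent should never guess which codebase a ticket belongs to.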

The result is that one person (me) can steer four products concurrently, because my job has shifted from writing code to approving plans and setting architectural direction. No additional headcount was needed to support this volume.

Measurable outcomes across the four products

- Cycle time from ticket creation to prod deploy dropped from days to hours for standard tasks.

- Planning quality is higher than when humans did it in a hurry — plans are explicit, written, and reviewed.

- Architectural drift (a new folder nobody approved, a stray dependency, a skipped test) is effectively zero, because rules are read before every change.

- Maintenance overhead on the projects themselves — the "keep the lights on" work — is now almost entirely handled by cloud agents.

The Result: Orchestration, Not Automation

The difference between this and classic CI/CD automation is worth stating plainly. Traditional automation executes a fixed script when a trigger fires. What we've built is orchestration: the agents decide what to do within an architectural contract, then the automation layer executes it deterministically and reversibly.

That is the real unlock of enterprise AI integration. It is not "AI writes code." It is: work flows from intent to production through well-defined transitions, and AI agents do the heavy lifting at the right points without ever being the system of record.

If you are running a growing product and your team is drowning in glue work — planning tickets, writing boilerplate PRs, coordinating deploys across repos — the architecture above is not science fiction. It is in production today on four of our products, and it can be adapted to yours.

If you'd like to see this architecture applied to your own stack — from Jira (or Linear) intake through to multi-environment auto-deploy — start here: AI-native Workflow Automation at EGO Digital. We'll map your current delivery flow, identify the transitions worth automating, and design an agent-native pipeline that fits your architecture rather than fighting it.

— Andrei, AI Systems Architect, EGO Digital
