Integrating LLM Responses into Real-Time UX: Performance Patterns

LLM integration in a real-time UI is no longer just a technical milestone; it is a product expectation. In modern frontend AI experiences, users do not judge quality only by the intelligence of responses. They judge by how quickly the interface reacts, how stable the interaction feels, and whether communication stays clear under uncertainty.

This matters in every AI-powered product, but it becomes especially critical in emotionally sensitive contexts where interface behavior and message quality directly affect trust. The key lesson: model performance alone does not create a strong user experience. Real-time UX does.

Problem: Why LLM Integration Needs a Deliberate Real-Time UI in User-Facing Frontend AI

Many teams successfully integrate LLMs and still struggle with adoption. The reason is simple: orchestration and model quality can be strong, while the live user experience still feels inconsistent.

Typical symptoms include:

- A noticeable pause after users send a message.

- Unstable streaming that causes text jumps and visual noise.

- Vague loading states that leave users unsure what is happening.

- Errors that are technically accurate but emotionally unhelpful.

- Session behavior that feels different between environments.

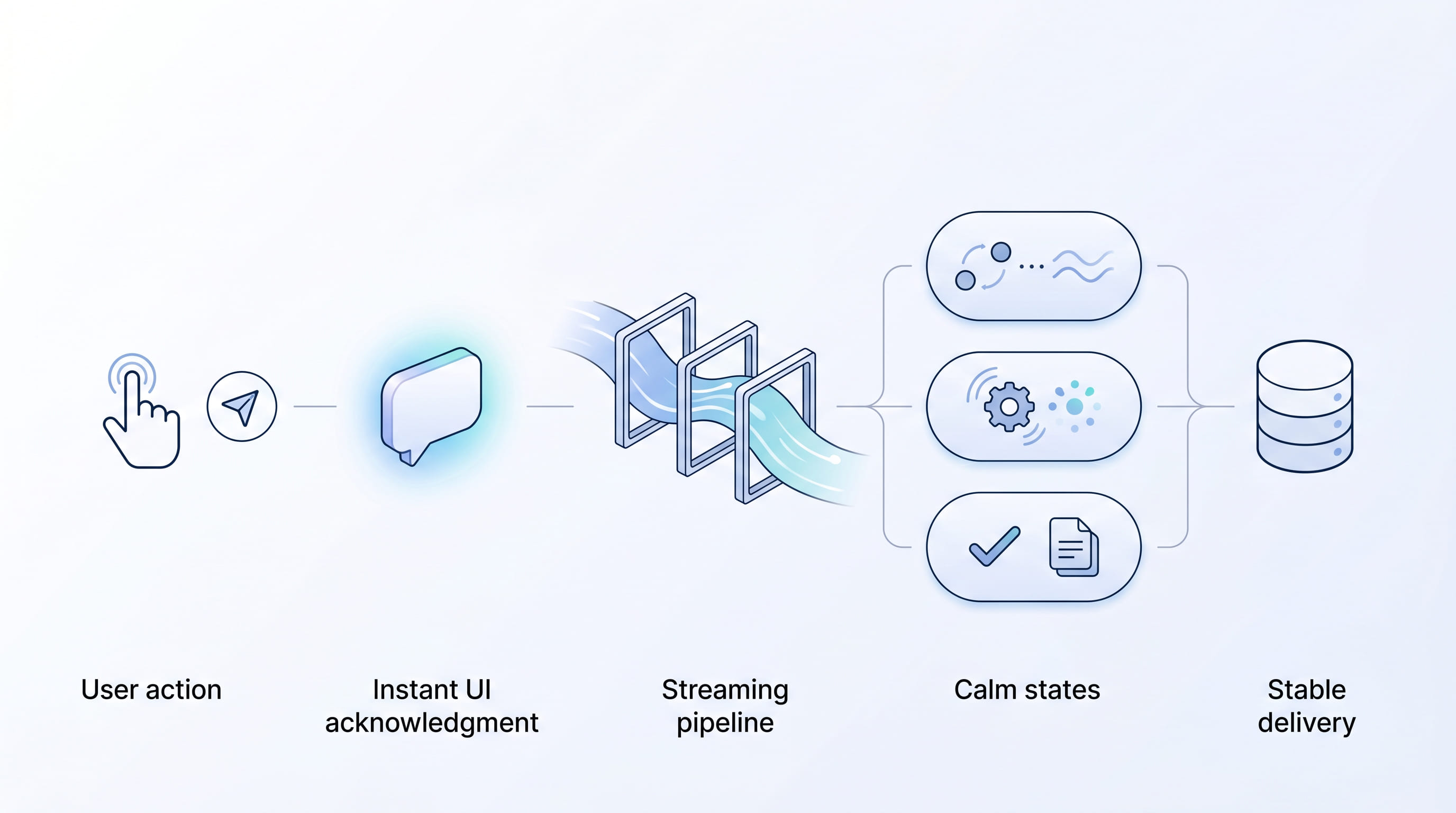

Solution: Real-Time UX Architecture for LLM Responses (Acknowledgment, Streaming, States)

To make LLM-powered interactions feel truly real-time, teams need a layered approach that combines backend orchestration with frontend delivery discipline.

1. Instant acknowledgment pattern

The UI should confirm user action immediately, before full generation completes.

- Render the user message instantly.

- Show a clear assistant “in progress” state.

- Communicate status progression in plain language.

This reduces perceived waiting time and reassures users that the system is responsive.
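A minimal TypeScript sketch of the acknowledgment step described above. The message model, the status names, and the label strings are illustrative assumptions, not taken from any particular framework or from a specific product. The idea is that the user message and an assistant placeholder are added in the same synchronous update, before any network request starts.

```typescript
// Hypothetical message model; all names here are illustrative assumptions.
type MessageStatus = "sent" | "thinking" | "streaming" | "done" | "error";

interface ChatMessage {
  id: string;
  role: "user" | "assistant";
  text: string;
  status: MessageStatus;
}

// Plain-language labels shown next to the assistant placeholder.
const STATUS_LABEL: Record<MessageStatus, string> = {
  sent: "",
  thinking: "Reading your message...",
  streaming: "Writing a reply...",
  done: "",
  error: "Something went wrong. You can try again.",
};

// Called synchronously on submit, before the request is sent:
// the user's message and an assistant placeholder appear together.
function acknowledge(history: ChatMessage[], userText: string, turnId: string): ChatMessage[] {
  return [
    ...history,
    { id: `${turnId}-user`, role: "user", text: userText, status: "sent" },
    { id: `${turnId}-assistant`, role: "assistant", text: "", status: "thinking" },
  ];
}

// Called for each arriving chunk; the placeholder moves to "streaming".
function applyChunk(history: ChatMessage[], assistantId: string, chunk: string): ChatMessage[] {
  return history.map((m): ChatMessage =>
    m.id === assistantId ? { ...m, text: m.text + chunk, status: "streaming" } : m
  );
}
```

Both functions return new arrays instead of mutating the history, so they can be plugged into most state containers. The status label for a message is looked up with `STATUS_LABEL[message.status]`.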

2. Controlled streaming, not raw streaming

Streaming should improve clarity, not create motion noise.

- Group token updates into small, readable intervals.

- Keep message layout stable while content grows.

- Avoid excessive jumps in scroll behavior.

The goal is smooth reading continuity, especially for longer or sensitive responses.
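One way to group token updates is a small batcher that releases buffered text at most once per interval. The sketch below is an assumption-laden illustration: `TokenBatcher`, `isNearBottom`, the interval, and the 48-pixel threshold are hypothetical names and values, and the clock is injectable only so the behavior can be checked without real timers.

```typescript
// Hypothetical batcher: collects streamed tokens and hands them to the UI
// at most once per `intervalMs`, so re-renders happen on a steady cadence.
class TokenBatcher {
  private buffer = "";
  private lastFlush: number;
  private readonly intervalMs: number;
  private readonly onFlush: (text: string) => void;
  private readonly now: () => number;

  constructor(
    intervalMs: number,
    onFlush: (text: string) => void,
    now: () => number = () => Date.now(),
  ) {
    this.intervalMs = intervalMs;
    this.onFlush = onFlush;
    this.now = now;
    this.lastFlush = now();
  }

  // Buffer the token; flush only if the interval has elapsed.
  push(token: string): void {
    this.buffer += token;
    if (this.now() - this.lastFlush >= this.intervalMs) this.flush();
  }

  // Emit whatever is buffered. Call this once when the stream ends.
  flush(): void {
    if (this.buffer === "") return;
    this.onFlush(this.buffer);
    this.buffer = "";
    this.lastFlush = this.now();
  }
}

// Auto-scroll only when the reader is already near the bottom, so growing
// content does not pull the view away from text the user scrolled up to read.
function isNearBottom(
  scrollTop: number,
  clientHeight: number,
  scrollHeight: number,
  thresholdPx = 48,
): boolean {
  return scrollHeight - scrollTop - clientHeight <= thresholdPx;
}
```

Because this version flushes only when a new token arrives or when `flush()` is called, the caller should call `flush()` at the end of the stream so the last partial batch is not left in the buffer.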

3. Message-first UX design

In real-time UI patterns for frontend AI, wording is part of performance.

- Use supportive microcopy for wait states and retries.

- Make error states actionable and non-alarming.

- Keep transitions predictable across all message states.

When communication is clear, users feel in control even during delays.
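Treating wording as part of the state model can be as simple as a lookup from failure type to user-facing copy. The following is a sketch under assumptions: the failure categories, the copy strings, and the `classifyFailure` helper are hypothetical, and a real product would write its own wording for its audience.

```typescript
// Hypothetical failure categories; a real product may need different ones.
type FailureKind = "timeout" | "network" | "rate_limited" | "unknown";

interface StateCopy {
  message: string;            // calm, plain-language explanation
  actionLabel: string | null; // what the user can do next, if anything
}

// Illustrative copy only: every entry says what happened and what to do next.
const FAILURE_COPY: Record<FailureKind, StateCopy> = {
  timeout: {
    message: "This is taking longer than expected. You can try again whenever you are ready.",
    actionLabel: "Try again",
  },
  network: {
    message: "The connection dropped. Please check your network and try again.",
    actionLabel: "Try again",
  },
  rate_limited: {
    message: "We are handling many conversations right now. Please wait a moment.",
    actionLabel: null,
  },
  unknown: {
    message: "Something went wrong on our side. You can try again.",
    actionLabel: "Try again",
  },
};

// Map low-level signals to a category. `httpStatus` is null when no
// response was received at all (for example, the request never connected).
function classifyFailure(httpStatus: number | null, timedOut: boolean): FailureKind {
  if (timedOut) return "timeout";
  if (httpStatus === null) return "network";
  if (httpStatus === 429) return "rate_limited"; // HTTP 429 Too Many Requests
  return "unknown";
}

function copyForFailure(httpStatus: number | null, timedOut: boolean): StateCopy {
  return FAILURE_COPY[classifyFailure(httpStatus, timedOut)];
}
```

Keeping the copy in one table makes it reviewable by writers and designers, and the `Record<FailureKind, StateCopy>` type ensures no failure category ships without wording.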

4. Operational consistency across environments

Perceived speed often breaks due to delivery differences, not model differences.

- Separate static and dynamic routes cleanly.

- Apply a strong cache strategy for static assets.

- Keep configuration predictable across staging and production.

Consistency reduces hidden friction and protects user trust at scale.
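The route separation above can be expressed as one small policy function that every environment shares. This is a sketch under assumptions: the `/assets/` and `/api/` prefixes are a hypothetical layout, and the long-lived policy is only safe if static file names contain a content hash. The `Cache-Control` directives themselves are standard HTTP.

```typescript
// Hypothetical route layout: hashed build output under /assets/, dynamic
// endpoints (including a streaming chat endpoint) under /api/.
type RouteKind = "static-asset" | "dynamic" | "document";

function classifyRoute(path: string): RouteKind {
  if (path.startsWith("/assets/")) return "static-asset";
  if (path.startsWith("/api/")) return "dynamic";
  return "document";
}

const CACHE_CONTROL: Record<RouteKind, string> = {
  // Content-hashed files never change, so they can be cached for a year.
  "static-asset": "public, max-age=31536000, immutable",
  // Model responses are per-user and per-request; never store them.
  "dynamic": "no-store",
  // HTML shells may be stored but must be revalidated before reuse.
  "document": "no-cache",
};

function cacheControlFor(path: string): string {
  return CACHE_CONTROL[classifyRoute(path)];
}
```

Using the same function in staging and production keeps header behavior identical across environments, so differences in perceived speed are easier to trace to real causes.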

5. Product-specific enhancements beyond orchestration

Enterprise orchestration platforms provide critical foundations, but user experience outcomes depend on additional product-level decisions.

- Add domain-aware response handling.

- Tune interaction states for your audience's expectations.

- Build graceful fallback paths for uncertain model behavior.
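A graceful fallback path can start with one rule: never render an empty or failed model result as if it were a reply. The sketch below is illustrative only. The `ModelOutcome` shape, the fallback wording, and the `toDisplayReply` helper are hypothetical and do not describe any orchestration platform's API.

```typescript
// Hypothetical result shape handed from the orchestration layer to the UI.
type ModelOutcome =
  | { kind: "ok"; text: string }
  | { kind: "empty" }
  | { kind: "failed" };

interface DisplayReply {
  text: string;
  isFallback: boolean;
  offerRetry: boolean;
}

// Illustrative wording; real copy should be written for the product's audience.
const FALLBACK_TEXT =
  "I could not put together a helpful answer just now. Would you like to try again?";

// Turn any outcome into something safe to show. Blank or whitespace-only
// output is treated the same as a failure, so users never see an empty bubble.
function toDisplayReply(outcome: ModelOutcome): DisplayReply {
  if (outcome.kind === "ok" && outcome.text.trim() !== "") {
    return { text: outcome.text, isFallback: false, offerRetry: false };
  }
  return { text: FALLBACK_TEXT, isFallback: true, offerRetry: true };
}
```

Domain-aware handling would extend this function, for example by choosing different fallback wording per conversation type, while the UI keeps depending on the single `DisplayReply` shape.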

Real Example: LLM Integration in Momentum — Supportive Real-Time UI and Frontend AI for IDF Soldiers, Families, and Chat

Momentum is a support platform for IDF soldiers and their families. It is a practical frontend AI case where LLM integration must feel steady and humane: people need immediate acknowledgment, calm communication, and predictable flow, not a cold, technical chat.

Momentum used IBM orchestration as a robust foundation for managing LLM workflows. To meet real user needs, however, the team implemented additional improvements on top of that foundation:

- Faster perceived response start through immediate UI acknowledgment.

- Smoother streaming behavior to reduce visual stress.

- Clearer message states with warm, supportive microcopy — not cold system updates — so users feel heard, understand progress, and sense genuine intent to help.

- More resilient error and retry communication for sensitive conversations.

- Frontend and delivery optimizations to keep behavior stable in production.

The takeaway is clear: IBM orchestration enabled reliable workflow coordination, but product success required deliberate real-time UI design and messaging — beyond baseline orchestration alone.

Result: How Real-Time UX Patterns Improve Trust and Adoption in LLM-Powered Support

By applying these performance patterns, teams typically see improvements that matter to both users and stakeholders:

- Higher trust in AI interactions.

- Better completion rates in chat journeys.

- Lower drop-off during the initial response wait.

- More consistent product perception across environments.

- Stronger alignment between technical capability and user expectations.

In high-sensitivity scenarios — such as support for service members and families — these gains are even more valuable, because interaction quality is part of service quality.

— Daria Boiko, Agentic Solutions Engineer

If your team is planning LLM integration for customer-facing products, prioritize the full real-time UI experience, not just model output speed. The strongest outcomes come from combining orchestration reliability with intentional frontend AI UX and messaging design.

Explore how we build production-grade AI experiences: EGO Digital.

Recent Articles

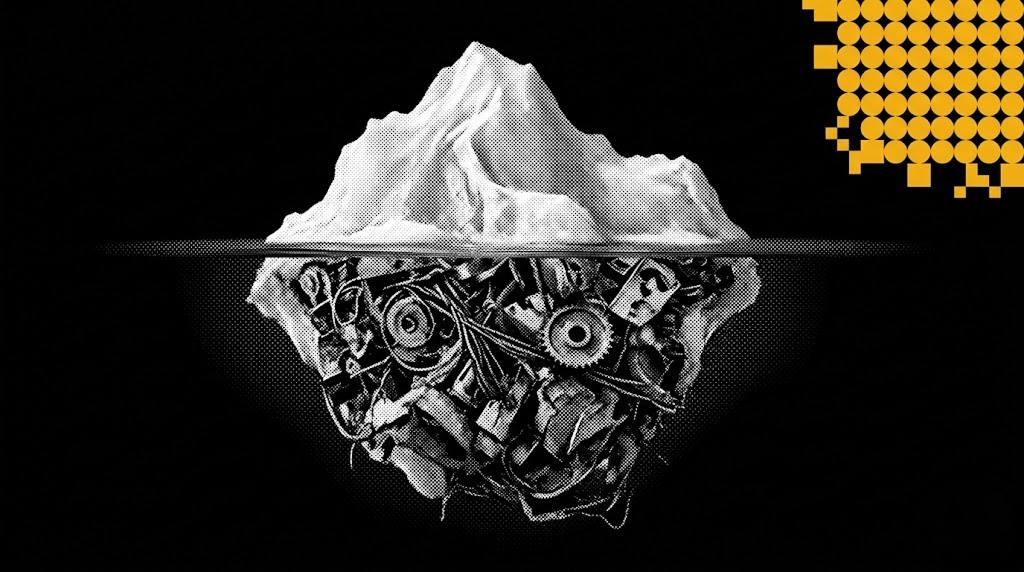

LLM Orchestration in Production: The Engineering Realities No Framework Prepares You For

Most teams shipping their first AI agent discover the same uncomfortable truth: the demo that wowed everyone in the all-hands meeting falls apart the moment real users touch it. LLM orchestration in production is not a harder version of prototyping — it is a fundamentally different discipline.

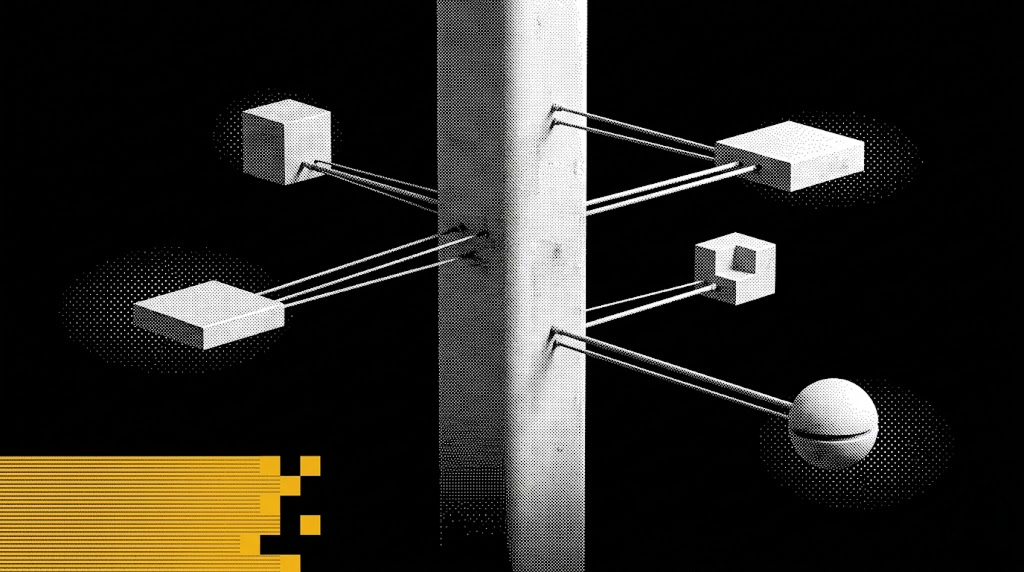

Enterprise AI Architecture: How We Connect 10+ Systems Without Breaking Anything

Enterprise AI integration is no longer about bolting a chatbot onto a legacy stack. It is about system architecture that lets autonomous agents plan, code, review, and ship — across Jira, GitHub, CI/CD, cloud runtimes, and multiple production apps — without a human babysitting every step. In this article I'll walk through the exact architecture we run at EGO Digital to connect 10+ systems, the automation loop that replaced our project managers' and mid-level engineers' routine work, and the lessons from putting it into production on four concurrent products.

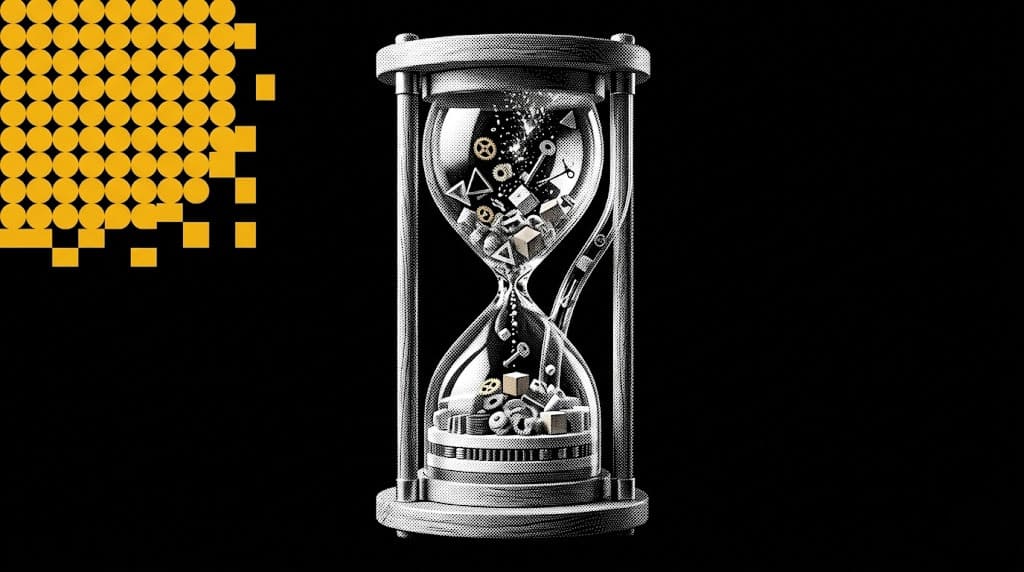

Managing AI Development Projects: Timelines, Risks, and What's Different

Imagine you are three weeks away from a major product launch. The frontend is sleek, the APIs are lightning-fast, and the stakeholders are already popping champagne. But at the center of your architecture sits a "Black Box"—a machine learning model that worked perfectly in the lab but is currently returning 40% accuracy on real-world data.

THE FUTURE IS AI-NATIVE.

LET'S BUILD IT WITH YOU.

Partner with us to design and deploy AI-native systems.