LLM Orchestration in Production: The Engineering Realities No Framework Prepares You For

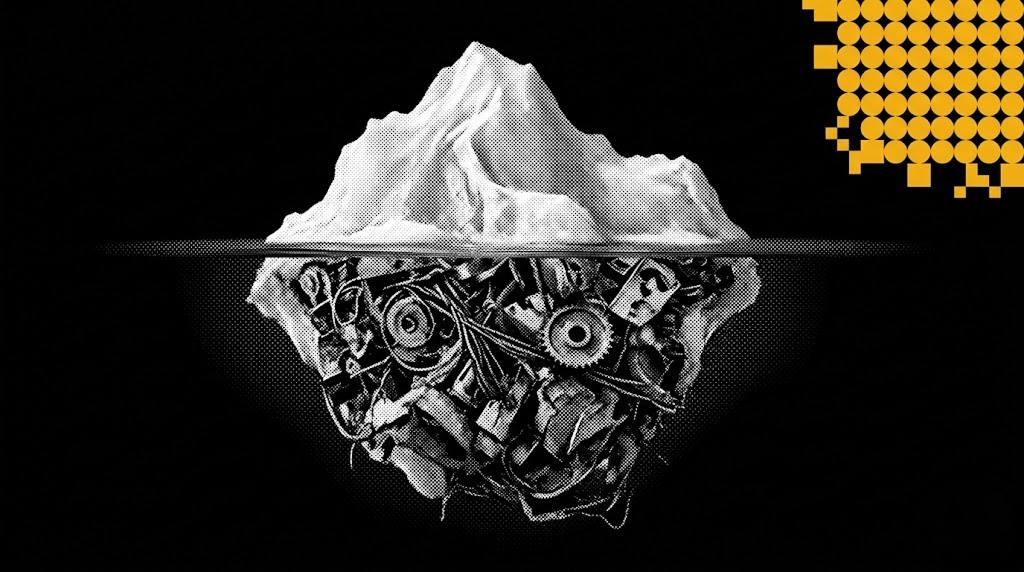

Most teams shipping their first AI agent discover the same uncomfortable truth: the demo that wowed everyone in the all-hands meeting falls apart the moment real users touch it. LLM orchestration in production is not a harder version of prototyping — it is a fundamentally different discipline.

The frameworks are loud about how easy it is to chain a few prompts together. They are quiet about what happens at 2 a.m. when a model provider degrades, a tool call times out mid-loop, and your agent has spent $40 retrying the same broken JSON parse. At EGO Digital, we have built and rebuilt enough production AI systems to know exactly what that quiet part sounds like. This article is about that.

The Problem: The Gap Between Demo and Production

A working prototype of a multi-step agent tells you almost nothing about whether it will survive production. Three forces conspire against you the moment you ship.

Latency compounds. A single LLM call with a p50 of 1.2 seconds feels fast enough. Chain six of them and the model time alone is about 7 seconds at the median; add the tool calls in between and you are looking at a 15-second response on a good day, 45 seconds on a bad one, when tail latencies stack. Users do not wait 45 seconds. They refresh, retry, and double your traffic.

Failure modes multiply. Every node in your production AI pipeline can fail independently: the model returns malformed JSON, the vector store times out, a tool call hits a rate limit, a downstream API returns a 502, the context window overflows because a retrieved document was larger than expected. In a six-step agent, you are not dealing with one failure mode — you are dealing with the Cartesian product of all of them.

Costs are non-linear. Retries, fallback models, expanded context on second attempts, and silent infinite loops can turn a $0.04 expected cost per request into a $4 actual cost. We have seen a single misconfigured AI agent burn through a month's budget in 11 hours.

Most orchestration tutorials show you the happy path. Production is the unhappy path — with telemetry attached, if you are lucky.

The Solution: Treat Orchestration as a Distributed Systems Problem

Our guiding principle at EGO Digital is Clarity Before Code. Before writing a single line of agent logic, we map the failure surface, define budget boundaries, and establish observability contracts. This discipline — applied across every AI engagement we run through the Mashu AI orchestration layer — is what separates systems that hold up under load from systems that get quietly switched off after the first production incident.

Once you start thinking of an agent as a distributed system with non-deterministic nodes rather than a clever script, the playbook becomes familiar.

Make every step idempotent and resumable. If your agent fails on step 4 of 6, you should not have to re-run steps 1–3. We persist intermediate state — the plan, the tool results, the partial reasoning — to a durable store keyed by a request ID. On retry, the orchestrator picks up where it left off. This single architectural decision cut our average cost-per-failed-request by roughly 70%.
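A minimal sketch of the resume-from-checkpoint idea, assuming a file-backed store as a stand-in for whatever durable store is actually used. `CheckpointStore`, `run_pipeline`, and the step names are hypothetical, not part of Mashu AI or any library.

```python
import json
from pathlib import Path
from typing import Any, Callable

# A step receives the state accumulated so far and returns a JSON-serializable result.
Step = Callable[[dict[str, Any]], Any]


class CheckpointStore:
    """File-backed stand-in for a durable store, keyed by request ID (an assumption)."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def load(self, request_id: str) -> dict[str, Any]:
        path = self.root / f"{request_id}.json"
        return json.loads(path.read_text()) if path.exists() else {}

    def save(self, request_id: str, state: dict[str, Any]) -> None:
        path = self.root / f"{request_id}.json"
        tmp = path.with_suffix(".tmp")
        tmp.write_text(json.dumps(state))
        tmp.replace(path)  # rename over the old file so a crash never leaves it half-written


def run_pipeline(
    request_id: str,
    steps: list[tuple[str, Step]],
    store: CheckpointStore,
) -> dict[str, Any]:
    """Run named steps in order, skipping any whose result is already persisted."""
    state = store.load(request_id)
    for name, step in steps:
        if name in state:
            continue  # completed on an earlier attempt; do not pay for it again
        state[name] = step(state)
        store.save(request_id, state)  # checkpoint after every successful step
    return state
```

If a step raises, the exception propagates and everything completed before it stays on disk. Calling `run_pipeline` again with the same request ID re-runs only the failed step and the ones after it. This only works when each step is safe to repeat, which is the idempotency half of the rule.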

Bound everything. Every loop gets a maximum iteration count. Every tool call gets a timeout. Every request gets a total budget cap in both tokens and dollars. When a budget is hit, the agent exits gracefully with a partial result and a clear error, not a 500. This is not just good engineering; for enterprise deployments with compliance obligations, it is a hard requirement.
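One way to sketch the loop and budget caps, under stated assumptions: `Budget`, `BudgetExceeded`, and `run_agent` are hypothetical names, and the step function is assumed to report its own token count and cost. The deadline here is checked between steps rather than enforced preemptively, so per-tool-call timeouts would still need to be set on the underlying client.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


class BudgetExceeded(Exception):
    """Raised when a request reaches one of its caps."""


@dataclass
class Budget:
    max_iterations: int
    max_tokens: int
    max_cost_usd: float
    deadline_s: float
    iterations: int = 0
    tokens: int = 0
    cost_usd: float = 0.0
    started: float = field(default_factory=time.monotonic)

    def check(self) -> None:
        """Call before each step; raises once any cap has been reached."""
        if self.iterations >= self.max_iterations:
            raise BudgetExceeded(f"iteration cap of {self.max_iterations} reached")
        if self.tokens >= self.max_tokens:
            raise BudgetExceeded(f"token cap of {self.max_tokens} reached")
        if self.cost_usd >= self.max_cost_usd:
            raise BudgetExceeded(f"cost cap of ${self.max_cost_usd:.2f} reached")
        if time.monotonic() - self.started > self.deadline_s:
            raise BudgetExceeded(f"deadline of {self.deadline_s}s exceeded")

    def record(self, tokens: int, cost_usd: float) -> None:
        """Call after each step with what that step actually consumed."""
        self.iterations += 1
        self.tokens += tokens
        self.cost_usd += cost_usd


# A step takes the iteration index and returns (output, tokens, cost_usd, done).
StepFn = Callable[[int], tuple[Any, int, float, bool]]


def run_agent(step: StepFn, budget: Budget) -> dict[str, Any]:
    """Loop until the step reports done or a cap is hit; never raise to the caller."""
    results: list[Any] = []
    try:
        while True:
            budget.check()
            output, tokens, cost_usd, done = step(len(results))
            budget.record(tokens, cost_usd)
            results.append(output)
            if done:
                return {"status": "ok", "results": results}
    except BudgetExceeded as exc:
        # Graceful exit: hand back what was produced plus a clear reason.
        return {"status": "partial", "results": results, "error": str(exc)}
```

Because caps are checked before each step, a request can overshoot a token or dollar cap by at most one step's worth of spend. If that margin matters, the check would need to include an estimate for the upcoming call.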

Route to the cheapest model that works at each step. Model routing is the most underrated cost lever in LLM orchestration. Classification and extraction tasks rarely need a frontier model. We typically run a lightweight model for intent detection and structured extraction, then escalate to a more capable model only for the synthesis step where reasoning quality actually matters. On one recent workflow, this routing strategy reduced cost by 62% with no measurable quality drop on our evaluation set.
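The routing idea can be sketched as a lookup from step kind to model tier. The tier names and per-token prices below are placeholders invented for illustration, not real model names or real pricing, and `route` and `estimate_cost` are hypothetical helpers.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_tokens: float


# Placeholder tiers: names and prices are assumptions, not real offerings.
SMALL = ModelTier("small-model", 0.0005)
LARGE = ModelTier("large-model", 0.01)

# Cheap tier for classification and extraction, capable tier for synthesis.
ROUTES: dict[str, ModelTier] = {
    "intent": SMALL,
    "extraction": SMALL,
    "synthesis": LARGE,
}


def route(step_kind: str) -> ModelTier:
    """Pick the tier for a step; unknown step kinds default to the capable tier."""
    return ROUTES.get(step_kind, LARGE)


def estimate_cost(
    plan: list[tuple[str, int]],
    router: Callable[[str], ModelTier] = route,
) -> float:
    """Estimate the dollar cost of a plan given as (step_kind, expected_tokens) pairs."""
    return sum(router(kind).usd_per_1k_tokens * tokens / 1000 for kind, tokens in plan)
```

Comparing `estimate_cost(plan)` against `estimate_cost(plan, router=lambda kind: LARGE)` gives a rough before-and-after figure for a given workflow. Whether the cheaper tier is actually good enough at a step is a question only an evaluation set can answer; the table above just encodes the decision once it has been made.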

Validate structured output at the boundary. Do not trust the model to return clean JSON. Use a schema validator — Pydantic, Zod, or JSON Schema — with a retry-on-failure loop bounded to two attempts. If both fail, fall back to a deterministic parser or return a structured error. Never let malformed output propagate downstream; it will surface as a confusing bug three steps later, and in regulated industries like fintech or healthcare, it can surface as a compliance incident.
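A sketch of boundary validation with a retry loop bounded to two attempts. To stay dependency-free it uses a hand-rolled check in place of Pydantic, Zod, or JSON Schema; the schema fields, `validate`, and `call_with_validation` are all hypothetical, and `call_model` stands for whatever function returns the model's raw text.

```python
import json
from typing import Any, Callable, Optional

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS: dict[str, type] = {"order_id": str, "quantity": int}


def validate(raw: str) -> dict[str, Any]:
    """Parse and check model output; raises ValueError on any problem."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected in REQUIRED_FIELDS.items():
        if type(data.get(key)) is not expected:
            raise ValueError(f"field {key!r} missing or not {expected.__name__}")
    return data


def call_with_validation(
    call_model: Callable[[str], str],
    prompt: str,
    fallback: Optional[Callable[[str], Optional[dict[str, Any]]]] = None,
    max_attempts: int = 2,
) -> dict[str, Any]:
    """Return {"ok": True, "data": ...} or a structured error; never raw model text."""
    raw, last_error = "", ""
    for _ in range(max_attempts):
        # On a retry, feed the validation error back so the model can correct itself.
        hint = f"\nPrevious output was invalid: {last_error}" if last_error else ""
        raw = call_model(prompt + hint)
        try:
            return {"ok": True, "data": validate(raw)}
        except ValueError as exc:
            last_error = str(exc)
    if fallback is not None:
        parsed = fallback(raw)  # deterministic parser, e.g. a regex over the raw text
        if parsed is not None:
            return {"ok": True, "data": parsed}
    return {"ok": False, "error": last_error, "attempts": max_attempts}
```

Downstream steps then only ever see a validated dictionary or an explicit `{"ok": False, ...}` result, so malformed output stops at the boundary instead of three steps later.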

Instrument like you mean it. Every span needs: request ID, step name, model used, input tokens, output tokens, latency, cost, and outcome. Without this, debugging a misbehaving agent is archaeology. With it, you can answer "why did this request cost $2.30?" in under a minute. Mashu AI ships with this observability layer built in — structured traces, cost accounting, and anomaly alerts — so teams are not building telemetry from scratch on top of every new workflow.
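As an illustration of the span fields listed above, here is a minimal sketch using only the standard library. It is not Mashu AI's tracing API; `Span`, `traced`, and `cost_breakdown` are hypothetical names, and a plain list stands in for a real trace backend.

```python
import time
from contextlib import contextmanager
from dataclasses import asdict, dataclass
from typing import Any, Iterator


@dataclass
class Span:
    request_id: str
    step: str
    model: str
    input_tokens: int = 0
    output_tokens: int = 0
    latency_s: float = 0.0
    cost_usd: float = 0.0
    outcome: str = "ok"


@contextmanager
def traced(
    request_id: str, step: str, model: str, sink: list[dict[str, Any]]
) -> Iterator[Span]:
    """Record one span per step, including steps that fail."""
    span = Span(request_id, step, model)
    start = time.perf_counter()
    try:
        yield span  # the caller fills in tokens and cost inside the with-block
    except Exception as exc:
        span.outcome = f"error:{type(exc).__name__}"
        raise
    finally:
        span.latency_s = time.perf_counter() - start
        sink.append(asdict(span))


def cost_breakdown(spans: list[dict[str, Any]], request_id: str) -> dict[str, float]:
    """Answer 'why did this request cost that much?' as cost per step."""
    totals: dict[str, float] = {}
    for span in spans:
        if span["request_id"] == request_id:
            totals[span["step"]] = totals.get(span["step"], 0.0) + span["cost_usd"]
    return totals
```

Usage is a `with traced(request_id, "extract", model_name, sink) as span:` block around each model or tool call, setting `span.input_tokens`, `span.output_tokens`, and `span.cost_usd` from the provider's response. Failed steps are still recorded, which matters because retries are often where the unexpected cost hides.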

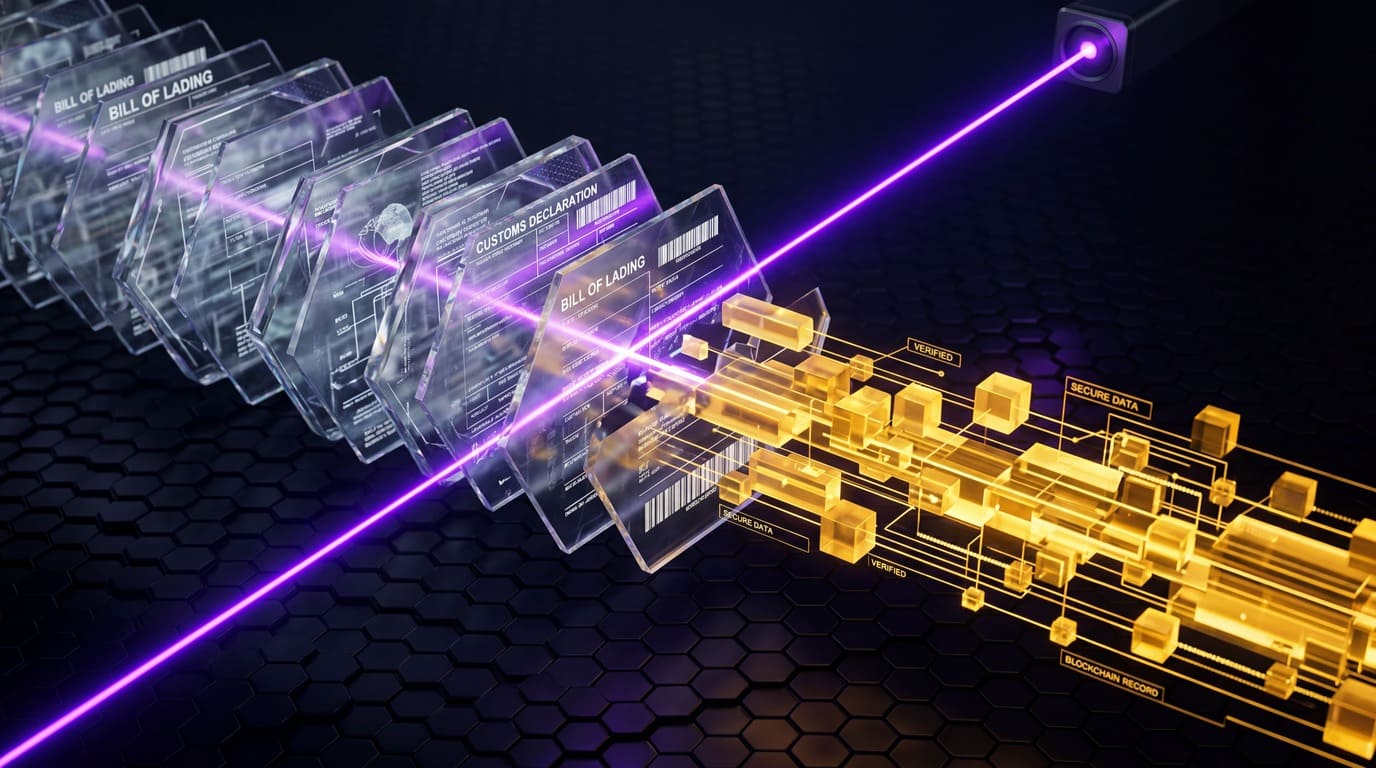

A Real Example: The Logistics Document Agent

We recently built a multi-agent system for a logistics client that ingests shipping documents — bills of lading, customs forms, packing lists — and reconciles them against the client's internal order system. Sounds like a classic OCR-plus-LLM pipeline. It was not.

The first version was a monolithic agent with five tools: extract text, classify document type, parse fields, look up the order, write the reconciliation result. It worked in the demo. In production, it failed on roughly one in four documents. The failures clustered around three issues: documents in mixed languages broke the classifier, fields appeared in wildly inconsistent positions across carriers, and the agent would occasionally loop forever trying to reconcile a partial match.

The rebuild followed every principle outlined above. We decomposed the monolithic agent into three specialized agents — an extractor, a classifier, and a reconciler — each with its own model tier, timeout budget, and output schema. The orchestration layer running on Mashu AI managed state handoffs between agents, enforced iteration limits on the reconciler, and surfaced partial failures with enough context for a human reviewer to step in. Document processing failure rate dropped from 25% to under 3%. Average cost per document dropped by 58%.
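The iteration limit on the reconciler, and the handoff to a human reviewer, might look something like the sketch below. This is an illustration of the pattern, not the client system: `ReviewTicket`, `reconcile`, the confidence threshold, and the `propose_match` callback are all assumptions introduced here.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Union


@dataclass
class ReviewTicket:
    """Context handed to a human reviewer when reconciliation gives up."""
    document_id: str
    reason: str
    candidates: list[str]


# Hypothetical callback: given the attempt index, returns (order_id or None, confidence).
ProposeMatch = Callable[[int], tuple[Optional[str], float]]


def reconcile(
    document_id: str,
    propose_match: ProposeMatch,
    max_iterations: int = 3,
    min_confidence: float = 0.9,
) -> Union[str, ReviewTicket]:
    """Return a confident order ID, or a review ticket once the attempt cap is hit."""
    candidates: list[str] = []
    for attempt in range(max_iterations):
        order_id, confidence = propose_match(attempt)
        if order_id is not None and confidence >= min_confidence:
            return order_id
        if order_id is not None and order_id not in candidates:
            candidates.append(order_id)  # keep partial matches for the reviewer
    return ReviewTicket(
        document_id=document_id,
        reason=f"no match at or above {min_confidence} after {max_iterations} attempts",
        candidates=candidates,
    )
```

The point of the design is that a partial match has exactly two exits, a confident answer or a ticket with the candidates attached, so the loop that used to run forever now ends after a fixed number of attempts.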

The architecture was not clever. It was disciplined.

Enterprise-Grade Orchestration Is Not Optional

For CTOs and engineering leaders building AI into core workflows, the question is no longer whether to use LLM orchestration — it is whether your orchestration layer is robust enough to carry production load, satisfy compliance requirements, and scale without becoming a liability.

At EGO Digital, we have been building and operating production AI systems since 2011, and our IBM Platinum Partnership gives us access to enterprise-grade infrastructure and security controls that most AI vendors cannot match. Whether you are in logistics, fintech, healthcare, or e-commerce, the engineering principles are the same — only the compliance surface changes.

The systems that last are the ones built on evidence, not intuition. On bounded, observable, resumable pipelines — not hopeful scripts.

Ready to move from fragmented AI tools to an orchestrated enterprise ecosystem? Talk to an EGO Digital expert →


Recent Articles

Integrating LLM Responses into Real-Time UX: Performance Patterns

LLM integration in a real-time UI is no longer just a technical milestone — it is a product expectation. In modern frontend AI experiences, users do not judge quality only by the intelligence of responses. They judge by how quickly the interface reacts, how stable the interaction feels, and whether communication stays clear under uncertainty.This matters in every AI-powered product, but it becomes especially critical in emotionally sensitive contexts where interface behavior and message quality directly affect trust. The key lesson: model performance alone does not create a strong user experience. Real-time UX does.

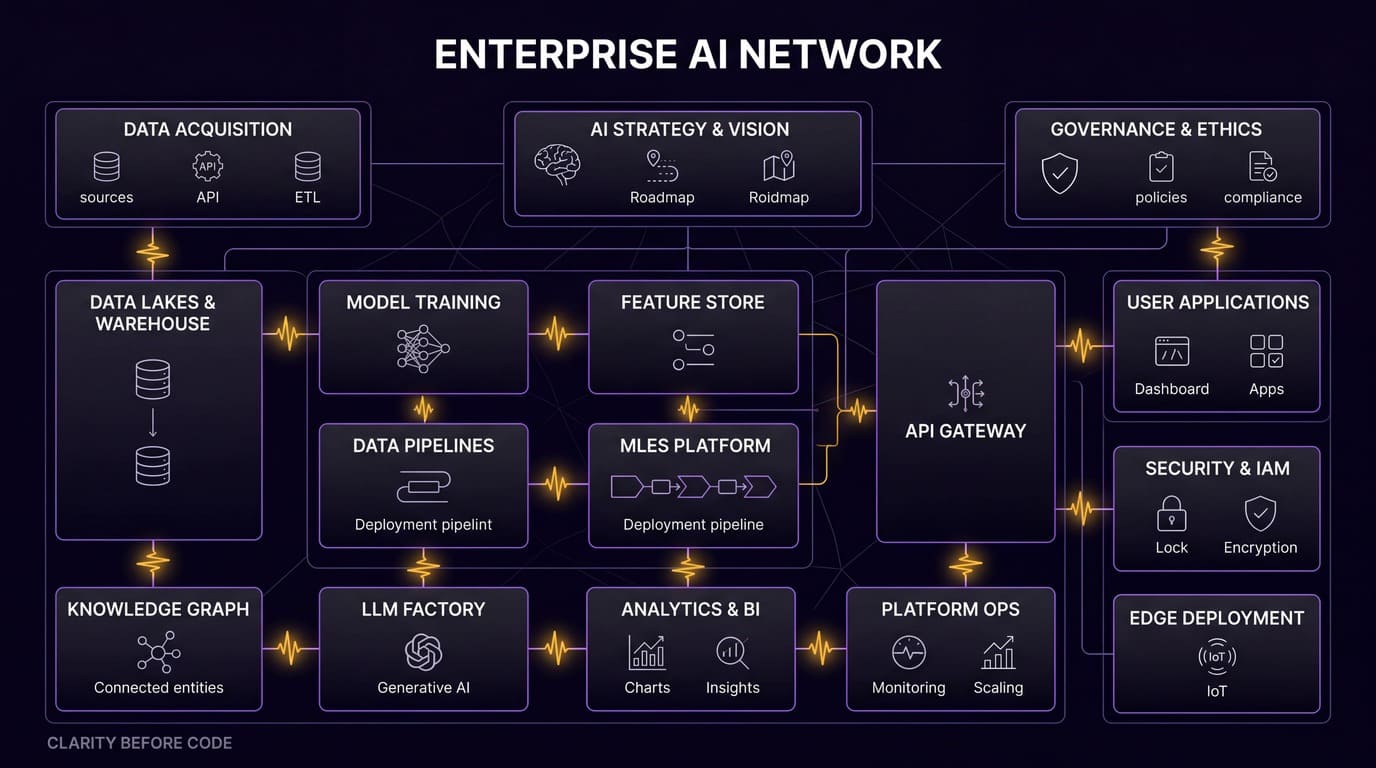

Enterprise AI Architecture: How We Connect 10+ Systems Without Breaking Anything

Enterprise AI integration is no longer about bolting a chatbot onto a legacy stack. It is about system architecture that lets autonomous agents plan, code, review, and ship — across Jira, GitHub, CI/CD, cloud runtimes, and multiple production apps — without a human babysitting every step. In this article I'll walk through the exact architecture we run at EGO Digital to connect 10+ systems, the automation loop that replaced our project managers' and mid-level engineers' routine work, and the lessons from putting it into production on four concurrent products.

Managing AI Development Projects: Timelines, Risks, and What's Different

Imagine you are three weeks away from a major product launch. The frontend is sleek, the APIs are lightning-fast, and the stakeholders are already popping champagne. But at the center of your architecture sits a "Black Box"—a machine learning model that worked perfectly in the lab but is currently returning 40% accuracy on real-world data.
