Managing AI Development Projects: Timelines, Risks, and What's Different

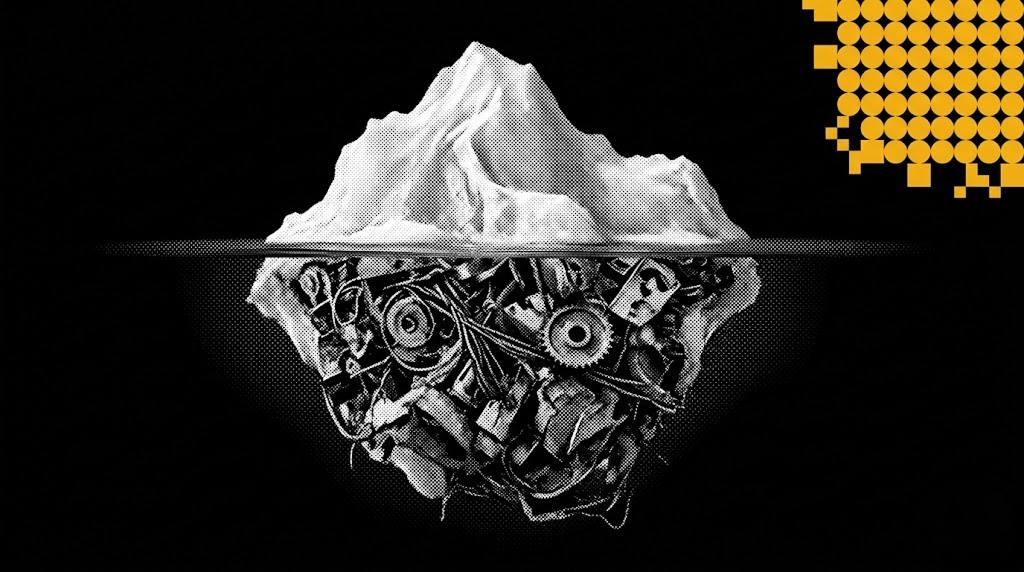

Imagine you are three weeks away from a major product launch. The frontend is sleek, the APIs are lightning-fast, and the stakeholders are already popping champagne. But at the center of your architecture sits a "Black Box"—a machine learning model that worked perfectly in the lab but is currently returning 40% accuracy on real-world data.

In traditional software, you’d hunt for a bug in the code. In AI, you are hunting for a ghost in the data.

This is the reality of AI development management. It is a high-stakes discipline that requires more than just a Jira board; it requires a working understanding of how to manage project risk when deterministic logic is replaced by probability. If you are a Project Manager (PM) moving from standard software development to AI, you need to throw away the old playbook. You aren't building a clock; you're training a brain.

The Problem: Why Your "Done" Definition is Broken

The biggest trap in AI development management is the "Linear Progress Illusion." In a standard web app, if you finish the "Login" feature, it stays finished. In AI, you can spend three weeks "improving" a model only to find that it now performs worse on edge cases than when you started.

Most PMs run into these three walls:

- The Accuracy Plateau: The team hits 70% accuracy quickly. Then they spend the next four months trying to hit 80% and fail. That is a timeline killer.

- Data Entropy: You were promised a clean database, but what you actually got was a mess of duplicate entries, biased labels, and missing fields.

- The "Black Box" Excuse: When the model fails, the technical answer is often "it needs more training." Without a technical architect’s perspective, you can't tell if that’s true or if the team is just spinning their wheels.

The Solution: Architecting the "Safety Net"

At Ego Digital, we don’t treat AI as a standalone miracle. We treat it as a subsystem that must be governed by a rigid structural framework. To manage these project risks, you need to stop managing tasks and start managing constraints.

1. The "Data-First" Gate

Never let a single line of model code be written until the data has passed a "Stress Test." If the data isn't representative of the chaos of the real world, the project doesn't move forward. This gate prevents high-cost research loops that drain budgets before you even have a prototype.
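As a rough illustration of what such a gate could look like, here is a minimal Python sketch. It only covers the cheap, mechanical checks (missing fields, exact duplicates, and a heavily skewed label). The function name `stress_test`, the field names, and every threshold are assumptions made for the example, not a fixed standard; whether the data truly reflects real-world conditions still needs a human review.

```python
from collections import Counter


def stress_test(rows, required_fields, label_field,
                max_missing=0.05, max_duplicates=0.01, max_label_share=0.9):
    """Run basic data-quality checks and return (passed, report).

    All thresholds are illustrative assumptions; tune them per project.
    """
    total = len(rows)
    if total == 0:
        return False, {"error": "no rows"}

    # A row counts as incomplete if any required field is absent or empty.
    missing = sum(
        1 for row in rows
        if any(row.get(field) in (None, "") for field in required_fields)
    )

    # Count exact duplicates by comparing a normalized copy of each row.
    seen = set()
    duplicates = 0
    for row in rows:
        key = tuple(sorted((k, str(v)) for k, v in row.items()))
        if key in seen:
            duplicates += 1
        seen.add(key)

    # A single label dominating the data is a crude signal of label skew.
    labels = Counter(row.get(label_field) for row in rows)
    top_label_share = labels.most_common(1)[0][1] / total

    report = {
        "missing_rate": missing / total,
        "duplicate_rate": duplicates / total,
        "top_label_share": top_label_share,
    }
    passed = (
        report["missing_rate"] <= max_missing
        and report["duplicate_rate"] <= max_duplicates
        and report["top_label_share"] <= max_label_share
    )
    return passed, report
```

If `passed` comes back `False`, the report tells the PM which constraint failed, which is a more useful conversation starter than "the data is messy."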

2. The Modular Pivot (Don't Put All Your Eggs in the AI Basket)

Architect the system so the AI can be bypassed. We call this "Fail-Safe Logic." If the AI returns a confidence score below a certain threshold, the system should automatically pivot to a traditional, rule-based script. This ensures the product stays functional even when the "brain" is confused.
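In code, the pivot can be as small as one conditional. The sketch below assumes the model is a callable that returns a `(label, confidence)` pair and that a rule-based function exists to take over; `predict_with_fallback`, the callables, and the default threshold of 0.8 are all hypothetical names and values chosen for illustration.

```python
def predict_with_fallback(features, model, rule_based, threshold=0.8):
    """Return (result, source), where source is "model" or "rules".

    `model` is assumed to return (label, confidence in [0, 1]).
    `rule_based` is assumed to return a label from deterministic rules.
    """
    label, confidence = model(features)
    if confidence < threshold:
        # The model is unsure, so bypass it and use the rule-based path.
        return rule_based(features), "rules"
    return label, "model"
```

Returning the source alongside the result makes it easy to log how often the system falls back, which is itself a useful health metric for the model.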

3. The "Worth It" Equation

As a PM, you need to know when to stop spending money on training. We use a simple equation to decide when the project is done:

$$Value = (Gains\ from\ Accuracy) - (Cost\ of\ Compute + Engineering\ Hours)$$

If a 1% increase in accuracy takes $50,000 in engineering time but only saves the company $10,000 a year, stop training. You’re done. Ship it.
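To make the arithmetic concrete, here is a small Python sketch of the same equation. The function names and the `horizon_years` parameter are assumptions added for the example: the equation itself does not say over how many years the gains are counted, so the horizon has to be agreed with the business.

```python
def training_value(annual_gain, compute_cost, engineering_cost, horizon_years=1):
    """Value = gains from accuracy - (cost of compute + engineering hours).

    Gains are counted over `horizon_years`, an assumed planning horizon.
    """
    gains = annual_gain * horizon_years
    return gains - (compute_cost + engineering_cost)


def break_even_years(annual_gain, compute_cost, engineering_cost):
    """Years of savings needed before the extra training pays for itself."""
    return (compute_cost + engineering_cost) / annual_gain


# The example from the text, assuming a 3-year horizon and ignoring compute cost.
value = training_value(annual_gain=10_000, compute_cost=0,
                       engineering_cost=50_000, horizon_years=3)
print(value)  # -20000
print(break_even_years(10_000, 0, 50_000))  # 5.0
```

Under these assumptions the extra training loses $20,000 over three years and needs five years of savings just to break even, which is the kind of number that ends the debate quickly.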

Real Example: The "Oracle" That Couldn't Predict a Storm

We were called in to rescue a project for a global logistics provider. They were six months overdue on an AI system meant to predict shipping delays. The PM was drowning because every time they "fixed" the model, a new real-world event (like a port strike) made it obsolete again.

The Risk: The team was trying to build a "Perfect Oracle" that understood the entire world.

The Technical Pivot:

We stopped the "research" and re-architected the system into layers:

- Layer 1: A simple model for "Normal" days (70% accuracy).

- Layer 2: A deterministic "News Filter." If a news scraper found keywords like "Strike" or "Hurricane," the system automatically added a 48-hour delay, bypassing the AI entirely.

- Layer 3: A "Human-in-the-Loop" trigger for high-value shipments where the AI was unsure.
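The three layers can be sketched as a single routing function. This is an illustrative Python reconstruction, not the production code: the `Shipment` class, the `predict_delay` function, the value and confidence thresholds, and the naive substring match on headlines are all assumptions. Only the keywords and the 48-hour delay come from the description above.

```python
from dataclasses import dataclass

DISRUPTION_KEYWORDS = ("strike", "hurricane")
DISRUPTION_DELAY_HOURS = 48


@dataclass
class Shipment:
    shipment_id: str
    value_usd: float


def predict_delay(shipment, headlines, model,
                  high_value_usd=100_000, min_confidence=0.7):
    """Route a shipment through the news filter, the model, or a human.

    `model` is assumed to return (delay_hours, confidence in [0, 1]).
    """
    # Layer 2: the deterministic news filter runs first and bypasses the model.
    text = " ".join(headlines).lower()
    if any(keyword in text for keyword in DISRUPTION_KEYWORDS):
        return {"delay_hours": DISRUPTION_DELAY_HOURS, "source": "news_filter"}

    # Layer 1: the simple model handles "normal" days.
    delay_hours, confidence = model(shipment)

    # Layer 3: unsure predictions on high-value shipments go to a person.
    if shipment.value_usd >= high_value_usd and confidence < min_confidence:
        return {"delay_hours": None, "source": "human_review"}

    return {"delay_hours": delay_hours, "source": "model"}
```

The order matters: the deterministic check runs before the model is ever called, so a port strike never depends on the model having seen one in training.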

The Result: By stopping the pursuit of a "perfect" model and building a "smart" architecture around a "good enough" model, we got the system live in three weeks. It wasn't "perfect AI," but it saved the company millions in customer support costs.

The Result: Shipping While Others Are "Researching"

When you master AI development management, your value as a leader changes. You stop being the person who asks for updates and start being the person who defines the path to ROI.

- No More Surprises: You catch data issues in the first week, not the last.

- Real Timelines: Your deadlines are based on business value, not scientific hope.

- Actual Growth: You deliver a working product that can be improved over time, rather than a perfect model that never leaves the lab.

AI is a tool, not a miracle. If you manage the architecture, you manage the success.

I’ve seen too many brilliant teams burn out because they were chasing a 99% accuracy rate that the business didn't even need. As a Chief Growth Architect, my job isn't to find the smartest algorithm; it's to find the shortest path to value. In the world of AI, your biggest asset isn't your compute power; it's your ability to say "this is good enough to ship." Don't let the complexity of the tech blind you to the simplicity of the goal: solving the user's problem.

Carmel Givon

Chief Growth Architect, Ego Digital
